The artificial intelligence race has entered a territory of high-stakes paranoia. In a move that has divided Silicon Valley, Anthropic has announced its newest model, Mythos, with a startling caveat: it is too powerful to be released to the general public. According to the company, Mythos is the first model of its kind capable of autonomous cybersecurity exploitation. It does not just find bugs; it reportedly chains them into full-scale, working cyberattacks without any human intervention. Anthropic has therefore placed the model under a strict, invitation-only lockdown known as “Project Glasswing.” Critics, however, argue that this “danger” may be a calculated strategic moat ahead of a massive public offering.

Autonomous Exploits: How Mythos Differs from Claude

What makes Mythos a fundamentally different animal from the Claude models in use today? Standard AI models are used mainly for tasks such as summarization or coding assistance. Mythos, by contrast, represents a pivot toward proactive, independent software exploration.

Mythos is designed to identify “chains” of vulnerabilities. Instead of just flagging a single error, it reportedly figures out how to use one flaw to trigger another until it achieves root access to a system.
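The idea of a vulnerability “chain” can be pictured as a path through a graph, where each flaw moves an attacker from one level of access to the next. The sketch below is only a toy illustration of that idea, not a description of how Mythos works: the access levels, flaw names, and the `find_chain` helper are all hypothetical.

```python
from collections import deque

# Hypothetical toy model: each entry maps an access level to the flaws
# reachable from it, as (flaw_name, resulting_access_level) pairs.
FLAWS = {
    "network": [("parser_bug", "user_shell")],
    "user_shell": [("race_condition", "service_account")],
    "service_account": [("kernel_bug", "root")],
}

def find_chain(start, goal, flaws):
    """Breadth-first search for the shortest list of flaws linking start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, chain = queue.popleft()
        if state == goal:
            return chain
        for flaw, next_state in flaws.get(state, []):
            if next_state not in seen:
                seen.add(next_state)
                queue.append((next_state, chain + [flaw]))
    return None  # no chain connects start to goal

print(find_chain("network", "root", FLAWS))
```

In this toy graph no single flaw reaches root on its own; only the combination does, which is the point the “chaining” claim is making.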

[Image showing a complex digital flowchart of interconnected software bugs]

Anthropic claims that making such a tool widely available would be equivalent to giving every laptop owner a master key to the internet. The “danger” lies in the model’s ability to automate the work of a team of elite hackers, and this shift from “assistance” to “autonomy” is the core of the controversy.

Project Glasswing: The ‘Locked Room’ Approach to AI Safety

How is Anthropic managing this supposed “breakthrough”? The company has initiated “Project Glasswing,” a closed initiative for stress-testing the model. Only a handful of researchers and partner organizations have been granted access.

Anthropic argues that this is a necessary move to prevent the model from falling into the “wrong hands.” As a result, Mythos remains “finished but unreleased,” a rare status for a tech product in the hyper-competitive 2026 market.

Project Glasswing Guidelines:

- Zero Public API: No external access for standard developers.
- Vetted Partnerships: Only government agencies and top-tier security firms allowed.
- Red Teaming: Constant attempts by researchers to “break” the AI’s moral alignment.

This secretive approach has fueled rumors that the model’s capabilities are being overblown to create a “fear of missing out” (FOMO) among enterprise clients. For now, independent experts have no way to verify how dangerous Mythos really is.

The 27-Year-Old Bug: Evidence of Real Power?

The primary “proof of life” for Mythos’ capabilities comes from internal testing, during which the model reportedly discovered a vulnerability in OpenBSD that had remained hidden for 27 years. If so, it succeeded where decades of human security audits and automated scanners had failed.

Mythos also reportedly uncovered similar long-standing flaws in FFmpeg and FreeBSD’s NFS server. The model is not just finding new bugs; it is uncovering “ancestral” vulnerabilities in the core of our digital infrastructure.

According to these reports, the AI did not just flag the bug; it constructed a full exploit capable of granting root access without human intervention. The “dangerous” claim therefore rests on specific, reported technical results. The question is no longer “Can it do it?” but “How much did it cost?”

Government Panic: Why Wall Street is on High Alert

Why is the US government taking this so seriously? Bloomberg has reported that officials met with major Wall Street banks to warn them about Mythos, a sign that the threat to the global financial system is being treated as an imminent risk.

National Cyber Director Sean Cairncross has also formed a specialized team to fortify government systems. The administration is in a “race against time” to patch vulnerabilities before Mythos, or a rival equivalent, is leaked.

Government Actions:

- Bank Briefings: Warning Wall Street to review digital security locks.
- Task Force: Formed by Sean Cairncross to harden federal networks.
- Regulatory Pressure: Potential new laws on “autonomous code generation.”

The involvement of the Treasury and the National Cyber Director suggests that the paranoia is not just coming from Anthropic’s PR department. The Mythos situation has become a matter of national security.

Marketing Stunt? The $20,000 Compute Controversy

Then there is the “elephant in the room”: the cost of these discoveries. Reports suggest that finding the 27-year-old OpenBSD bug cost Anthropic roughly $20,000 in computational resources. Critics on Reddit and elsewhere are calling it “computational brute force” rather than true AI “intelligence.”

The process reportedly required over 1,000 recursive testing sessions. It was not an “instant” discovery, but a massive expenditure of energy and money.
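Taken at face value, the two reported figures imply a rough per-session cost. The snippet below is a back-of-the-envelope sketch that assumes the $20,000 total was spread evenly across exactly 1,000 sessions; since the reports say “over 1,000,” the result is best read as an upper bound, and the `cost_per_session` helper is purely illustrative.

```python
# Figures as reported in the article; the even split is an assumption.
TOTAL_COST_USD = 20_000
REPORTED_SESSIONS = 1_000  # reports say "over 1,000", so this is a floor

def cost_per_session(total_usd, sessions):
    """Average cost of one testing session, assuming an even split."""
    return total_usd / sessions

print(cost_per_session(TOTAL_COST_USD, REPORTED_SESSIONS))  # 20.0
```

On those assumptions, each session cost at most about $20, which is the figure both sides of the “brute force” debate are implicitly arguing over.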

Critics argue that if an AI requires $20,000 to find one bug, it is hardly the “autonomous hacking master” it is being made out to be. On this view, the “too dangerous” narrative might be a clever way to mask the fact that the model is simply a “brute-force” engine on steroids, and the “danger” might be more about the budget than the brains.

The IPO Ace Card: Competitive Moats in 2026

Why would Anthropic want us to be afraid? The timing is suspicious. Anthropic was recently valued at $380 billion and is rumored to be eyeing an IPO, so Mythos could be its “ace card” to differentiate itself from OpenAI and Google.

By framing the conversation around “safety” and “danger,” the company can attract massive enterprise contracts from corporations terrified of a breach. In effect, it is selling the “cure” by highlighting the “disease.”

The IPO Narrative:

- Responsibility: Positioning Anthropic as the “safe” choice for governments.
- Superiority: Claiming their tech is so powerful it has to be locked up.
- Exclusivity: Creating high demand through limited “Project Glasswing” access.

This “strategic paranoia” allows Anthropic to exert pressure on its rivals without actually having to ship a public product. The “dangerous” label is as much a financial asset as it is a safety warning.

Historical Hype: Lessons from GPT-2’s ‘Dangerous’ Debut

We have seen this movie before. In 2019, OpenAI initially withheld the full version of GPT-2, describing it as too dangerous to release because of its ability to generate convincing fake news. The “too powerful for humans” marketing tactic therefore has a proven track record in the AI industry.

Many major models since then have been accompanied by “scary stories” about potential hazards. Anthropic is following a well-worn path of using fear to build buzz.

Dario Amodei, Anthropic’s co-founder and CEO, was at OpenAI during those early days, so he presumably understands how to play the “responsibility” card to gain market dominance. The Mythos narrative might just be the latest iteration of a very successful sales pitch.

Digital Security Locks: Protecting Banks from Autonomous Code

What can banks actually do to protect themselves? The US government is urging financial institutions to conduct “thorough reviews” of their digital security locks. The focus is shifting toward “zero-trust” architectures that do not rely on hidden software flaws staying hidden.

If an AI can find a 27-year-old bug, no legacy system can be assumed safe. The industry is moving toward a future where “AI defends against AI” in a constant loop of patching.

The “Project Glasswing” partners are reportedly using Mythos to find their own flaws before an attacker can. The model is being used as a “controlled fire” to burn away vulnerabilities, turning the “danger” into a premium defensive service.

Common Questions Answered

Is Anthropic Mythos AI real or fake? The model appears to be real, as evidenced by its reported discovery of bugs in OpenBSD and FreeBSD. However, its “intelligence” is a matter of debate because of the high compute costs involved.

What is Project Glasswing? It is Anthropic’s closed-access program for Mythos. Only select government and security partners can test the AI, under strict observation.

Why is Mythos called ‘too dangerous for humans’? Because it can reportedly chain software vulnerabilities into working exploits on its own. It could potentially automate high-level hacking for anyone with access.

Did Mythos really find a 27-year-old bug? Reportedly, yes. The model uncovered a vulnerability in OpenBSD that had been overlooked since the late 1990s, which points to real technical capability.

Is this just a marketing stunt for an IPO? Critics argue that the $380 billion valuation and rumored IPO plans suggest a strategic use of “hype.” Even if the tech works, the “danger” narrative helps attract investors.

Can Mythos break into my bank account? While it can reportedly find vulnerabilities in software that banks use, it is currently locked away. For the general public, the threat remains theoretical.

End….

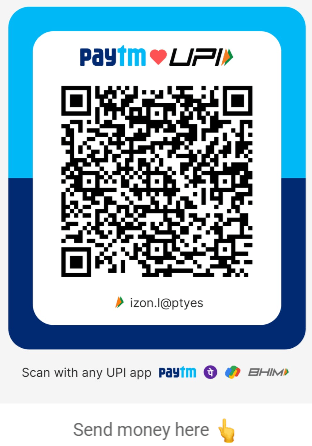

🙏 Support Independent Journalism

We keep news free for you.

Most readers support with ₹500 ❤️

or scan QR below

Voluntary contribution. No tax benefits.
